Quant · 6 min read

Back To The Static

In the beginning, the web was static. Join Quant as we journey back to the days of Netscape Navigator and dial-up modems, and rediscover the advantages of serving static websites over complex web-serving architectures.


Preamble

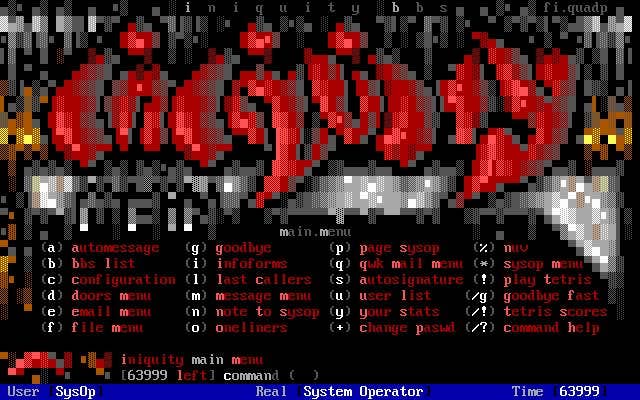

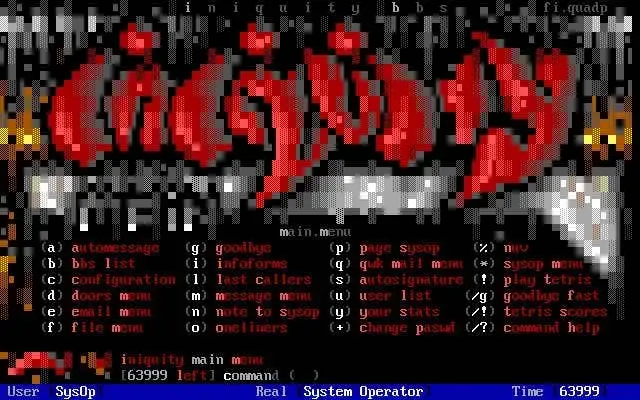

The internet has a long and storied past. From the days of ARPANET, BBSes, IRC, and the founding of the World Wide Web, we’ve come a long way. I was around for most of the journey, spending way too much time playing LORD on local BBSes and eventually running my own as a kid (called Den of Iniquity, which is… wow. Context lost on a 10-year-old). I started my first company (GettaSite! Before Your Competitors Do™) in high school, building websites during the golden era. I’ve been building ever since.

From BBS to the first internet browser and beyond…

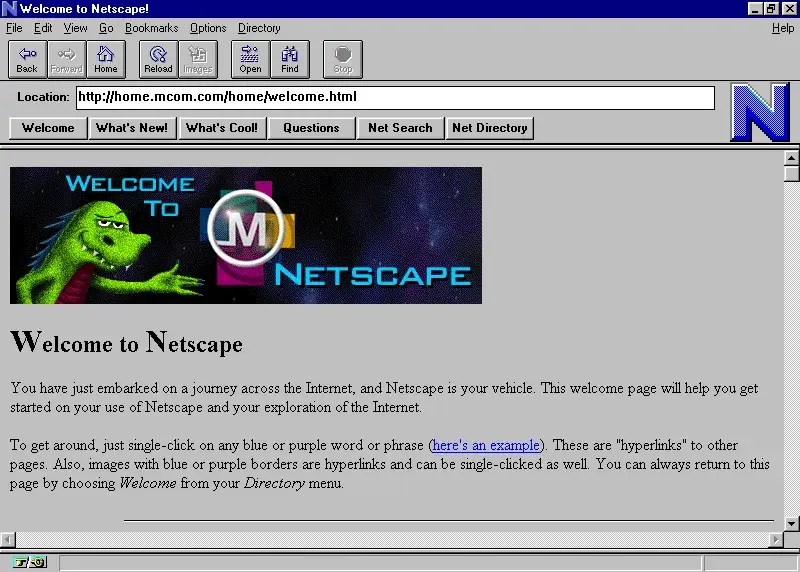

The early days were simple: static, no-fuss websites written in Notepad and served over unencrypted protocols to anyone lucky enough to stumble upon a functional domain. As the internet grew from a “flash in the pan” into something much more meaningful, things evolved at a rapid pace. As we added layers to provide scalability, performance, security, and development flexibility, we moved further away from our simple beginnings and deeper into an ever-evolving, complex landscape of virtualization, cloud providers, content management systems, backend and frontend frameworks, and autoscaling serving architectures. Things became more complex, more expensive, and more prone to breaking.

The future sees us move back to lean, secure, static serving while maintaining the benefits we’ve discovered along the way.

Where it all began


In the beginning, the web was static. We used insecure FTP to ship images, markup, and CSS, and served them directly to tiny audiences connected via 33.6k modems and paying by the hour. Those visitors watched with delight as content trickled in: images appearing in chunks, GIFs by the dozen, and background MIDIs blaring through small speakers. Our web servers hummed along, there simply to pass files to clients.

Small companies forward-thinking enough to join the movement paid absurd fees to have their websites built, all of which contained exactly four pages and had a navbar that looked like this:

ABOUT | SERVICES | LOCATION | CONTACT

Sometimes they had a crudely sketched map on the contact page; most of the time they did not.


In 1995, GeoCities launched, at a time when only a few million people were online globally. A whole universe of gaudy sites sprang up, all in a perpetual state of construction.

It ushered in an era of simple, interface-driven, user-generated content. It opened the door to free expression on the internet and seeded the early ideas that became blogs and social media networks.

We got ambitious

In the late 90s and early 2000s, the internet became more than a flash in the pan. Enterprises began to take note; dynamic server-side rendering, encryption, and JavaScript became the norm. Open source content management systems (CMSes) such as Drupal, WordPress, and Joomla arrived, and managing web content became a self-service affair. Netscape and Microsoft agreed to abolish the MARQUEE and BLINK tags. The world felt like a safer place.

… Then we suffered through our first major DDoS attack. The normally nice Canadians let one of their bad boys loose, and he in turn brought Amazon and eBay to their knees. The FBI estimated $1.7bn in damages; nobody was prepared to operate at scale, and governments took notice. These events raised questions of national security. Perhaps we should think this through.

In our haste to build the all-singing, all-dancing web, we had placed major burdens on our underlying infrastructure. Web servers were no longer simply responsible for dishing up static assets; they actually needed to do stuff. Authentication, session management, database-driven backends, file management, and dynamic page rendering all made our lean servers much, much heavier. Each additional layer widened our attack surface, and in the early days security was often an afterthought.

We adapted, at a cost

Scalable hosting architecture became critical to supporting the ever-growing needs of enterprise. We found ways of running our file and web servers, databases, and caching layers with replication, increasing both performance at scale and resilience against failure.

We added CDN layers to prevent hordes of ravenous users from accessing cacheable content directly from our servers, ensuring regional offload to minimize latency. We added WAF layers to protect our servers in a perpetual game of cat and mouse. We added bot detection, heuristics, and machine learning to prevent overzealous robots from stealing our content.
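To make the offload idea concrete, here is a minimal sketch of a single edge node acting as a time-to-live (TTL) cache in front of an origin server. The class name `EdgeCache`, the `ttl_seconds` parameter, and the fake origin are hypothetical illustrations, not any real CDN's API; production CDNs also honor headers such as `Cache-Control`, support purges, and run across many locations.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """One CDN edge node modeled as a TTL cache in front of an origin (hypothetical sketch)."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], bytes],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, bytes]] = {}  # path -> (expires_at, body)
        self.origin_hits = 0

    def get(self, path: str) -> bytes:
        now = self._clock()
        cached = self._store.get(path)
        if cached is not None and cached[0] > now:
            return cached[1]  # fresh copy: served from the edge, origin never sees it
        body = self._fetch(path)  # miss or expired: one trip back to the origin
        self.origin_hits += 1
        self._store[path] = (now + self._ttl, body)
        return body


if __name__ == "__main__":
    fake_now = [0.0]  # controllable clock so the demo is deterministic

    def origin(path: str) -> bytes:
        return f"<h1>{path}</h1>".encode("utf-8")

    edge = EdgeCache(origin, ttl_seconds=60, clock=lambda: fake_now[0])
    for _ in range(1000):  # a horde of visitors within one TTL window
        edge.get("/about")
    print(edge.origin_hits)  # 1
    fake_now[0] = 61.0  # the cached copy has now expired
    edge.get("/about")
    print(edge.origin_hits)  # 2
```

In this model, only the first request for a path in each TTL window reaches the origin; the rest of the horde is answered by the edge.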

AWS, Azure, Google Cloud, and others burst onto the scene and abstracted away a lot of the pain by providing a simple UI to spin up common distributed services. Organizations retired their own racks of physical machines and moved to “the cloud” (which, it turns out, is also racks of physical machines).

Autoscaling technology, containerization, self-healing, Kubernetes. We’ve reached the peak.

The future is +

The future sees us return to our roots, in some ways. Wherever possible, we will remove complexity from the modern serving stack and go back to serving static assets directly to our end users. This modern static movement is already underway, with Netlify an early proponent of the shift back to a modern static web. Netlify coined the term Jamstack and started the movement around it; the name refers to the JavaScript, APIs, and Markup that make up a modern static solution. Markup encompasses the static assets we serve (HTML, CSS, media), while JavaScript and APIs handle dynamic content and more complex requirements via microservices.

With Jamstack, we remove the heavy dependencies required to serve content. We build static representations of our content once, and only re-render when it changes. The content served to our site visitors comes from a globally-distributed edge, completely decoupled from our origin servers. Dynamic content is handled via microservices and APIs.
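The “build once, re-render only on change” idea can be sketched in a few lines. This is a minimal illustration, assuming page content arrives as a dictionary of titles and bodies keyed by URL path and that a content hash is a good enough change signal; `render_page` and `incremental_build` are hypothetical names, and a plain dictionary stands in for the files published to the edge. It is not how QuantCDN or any particular static site generator implements incremental builds.

```python
import hashlib
import html
from typing import Dict, List


def render_page(title: str, body: str) -> str:
    """Render one piece of content into a static HTML document (hypothetical template)."""
    safe_title, safe_body = html.escape(title), html.escape(body)
    return (
        "<!doctype html><html><head>"
        f"<title>{safe_title}</title></head>"
        f"<body><h1>{safe_title}</h1><p>{safe_body}</p></body></html>"
    )


def incremental_build(
    content: Dict[str, Dict[str, str]],
    previous_hashes: Dict[str, str],
    output: Dict[str, str],
) -> List[str]:
    """Re-render only pages whose source content changed since the last build.

    content:         URL path -> {"title": ..., "body": ...} (assumed CMS export shape)
    previous_hashes: URL path -> content hash from the previous build (updated in place)
    output:          URL path -> rendered HTML; stands in for files published to the edge
    Returns the paths that were (re)rendered on this run.
    """
    rebuilt: List[str] = []
    for path, page in content.items():
        source = page["title"] + "\0" + page["body"]
        digest = hashlib.sha256(source.encode("utf-8")).hexdigest()
        if previous_hashes.get(path) == digest:
            continue  # unchanged: the existing static file keeps being served as-is
        output[path] = render_page(page["title"], page["body"])
        previous_hashes[path] = digest
        rebuilt.append(path)

    # Pages deleted from the CMS are unpublished from the static output as well.
    for path in list(previous_hashes):
        if path not in content:
            del previous_hashes[path]
            output.pop(path, None)
    return rebuilt


if __name__ == "__main__":
    site = {
        "/": {"title": "Home", "body": "Welcome"},
        "/about": {"title": "About", "body": "Est. 1999"},
    }
    hashes: Dict[str, str] = {}
    files: Dict[str, str] = {}
    print(incremental_build(site, hashes, files))  # ['/', '/about']
    print(incremental_build(site, hashes, files))  # []
    site["/about"]["body"] = "Est. 2000"
    print(incremental_build(site, hashes, files))  # ['/about']
```

Hashing the source content rather than comparing timestamps keeps the check deterministic: a page is re-rendered only when what it displays has actually changed.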

I have been a fan of Jamstack since its infancy; it represents everything I think the web should be… but I couldn’t help wondering whether we could improve things even further. Current solutions (commonly known as Static Site Generators) require some level of re-architecture of your existing website, usually a major overhaul or rebuild. For those who have invested heavily in their existing web presence, that is a significant hurdle. I wanted to find a way to bring the benefits of the modern static web to everyone, and thus QuantCDN was born.

QuantCDN is a Jamstack-friendly solution that takes the static movement further, lowering the barrier to entry with turnkey solutions for CMSes such as WordPress and Drupal (with more to come). We have native support for the most complex requirements (incremental builds/content change, content creation and editing directly at the edge, automated revisioning, rollback, to-the-second scheduled publishing, search, forms, and more). Enable a CMS module, click a button, and you’re enjoying the benefits without the need to rebuild.

The future is lighter, leaner, faster, more secure, and it costs less to boot. Join the movement today.